
[Trigger Warning: This post is about trigger warnings]

I have taught a number of controversial topics in my time. I have taught about the ethics of sex work, the criminalisation of incest, the problems of rape and sexual assault, the permissibility of torture, the effectiveness of the death penalty, the problems of racial profiling and bias in the criminal justice system, and the natural law argument against homosexuality (to name but a few). I try to treat these topics with a degree of seriousness and detachment. I tell students that the classroom is a space for exploring these issues in a discursive and reflective manner. I also warn them to be respectful to their classmates when expressing opinions. They may not realise how the issues we discuss affect others in the class.

That said, I’ve never had an explicit policy or practice of issuing trigger warnings. To be honest, I had never even heard the term until about 2013. But from then, until roughly the end of 2015, there seemed to be an explosion of interest in the ethics of trigger warnings. Students started demanding them in a number of US universities. And opinion writers fumed and fulminated about them in newspapers and websites. A lot of heat was generated but little light. Commentators divided into pro and anti camps and became deeply entrenched in their positions.

My general sense is that the debate about trigger warnings has waned since its peak. There is certainly still a vigorous debate about ‘coddling’ on university campuses, and a persistent desire to create ‘safe spaces’ in order to accommodate oppressed minorities, but the specific debate about trigger warnings seems (to me at any rate) to have faded into the background.

So that means now is probably a good time to reflect on its merits and see whether it casts any light on the more general debate about safe spaces and campus ‘coddling’. Fortunately there are some academic resources that help us to do this. Wendy Wyatt’s article “The Ethics of Trigger Warnings” is a particularly useful guide to the topic and I’m going to summarise and evaluate some of its contents over the next two posts.

Wyatt’s article comes in two halves. The first half reviews the arguments of the ‘pro’ and ‘anti’ groups. The second half defends Wyatt’s own view on the ethics of trigger warnings. There is something of a disconnect between both halves. Although she promises to evaluate and engage with the arguments of the pro and anti sides presented in the first half, on my reading she doesn’t really do this in the second half. She summarises their views and then develops her own. Her position certainly builds upon and refers back to the arguments of the pro and anti sides, but she does not specifically evaluate the merits of their arguments.

I’m going to try to make up for this omission in the remainder of this post. I will review the seven ‘anti’ and four ‘pro’ arguments that she identifies in her article. I will try to formalise them into simple arguments and reveal their hidden assumptions. I will then subject them to some critical evaluation. My evaluation will be light. As you will see, the debate about trigger warnings raises lots of issues in philosophy, psychology and sociology. It would be beyond my knowledge to fully evaluate all these issues. So, instead, I will limit myself to identifying possible weaknesses and areas requiring greater scrutiny.

Note: I write this with considerable trepidation. Dipping your toe into any campus politics debate seems to be a surefire way to attract the ire (or worship) of some. I hope to avoid both fates.

1. Seven Arguments Against Trigger Warnings

As Wyatt notes, most media commentators are sceptical (and maybe even contemptuous) of trigger warnings and the culture from which they emerge. They think that trigger warnings are counterproductive to the aims and ethos of higher education, and possibly harmful to students and society more generally. Seven objections, in particular, have been voiced by the critics.

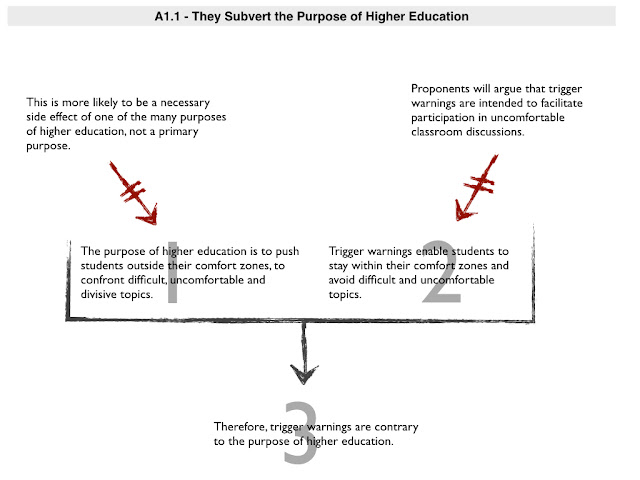

1.1 - It’s contrary to the purpose of higher education

The first objection is that trigger warnings subvert the point of higher education. Higher education is supposed to challenge students, to push them outside their comfort zones, and to encourage them to confront divisive and contentious issues. Trigger warnings enable students to stay within their comfort zones and avoid divisive topics.

To put this objection in more formal terms:

- (1) The purpose of higher education is to push students outside their comfort zones, to confront difficult, uncomfortable and divisive topics.

- (2) Trigger warnings enable students to stay within their comfort zones and avoid difficult, uncomfortable and divisive topics.

- (3) Therefore, trigger warnings are contrary to the purpose of higher education.

I’m inclined to accept premise (1), with some caveats. The major caveat is that I doubt that this is the primary or sole purpose of higher education. I suspect it is really only a secondary consequence of one of many purposes of higher education. In other words, I’m not convinced that confronting difficult and uncomfortable topics is an end-in-itself for higher education. I think the primary purposes of higher education are plural (teaching students important skills, imparting important knowledge, encouraging them to be critical and self-reflective, preparing them for employment, etc.) and that in many instances achieving these purposes will require them to confront difficult or uncomfortable topics. I cannot imagine teaching an ethics course, for instance, without doing this. But some subjects (e.g. physics or advanced mathematics) seem to me like they would not necessarily require students to confront uncomfortable or divisive topics (although I guess certain aspects of physics could be uncomfortable to those with contrary religious beliefs). On top of this, I don’t think anyone wants students to feel uncomfortable just for the sake of it. The discomfort is simply unavoidable if they are going to deal with certain topics.

The second premise strikes me as being more problematic. Most supporters of trigger warnings will argue that their purpose is not to enable students to avoid uncomfortable topics but rather to enable them to prepare to engage with such topics. Thus, students are not supposed to use trigger warnings as an excuse to avoid learning outcomes, but as a tool to facilitate better participation in the activities that lead to those outcomes. This response strikes me as plausible when it comes to stating the intention behind their use, but I think some caution is needed. There is a danger that trigger warnings foster ‘respect creep’, whereby they start off as a tool for enabling participation but end up as an excuse to avoid participation due to the need to respect the vulnerabilities of the students in question. This ‘slippery slope’ style concern becomes something of a theme in the remainder of this post.

1.2 - They threaten free speech and academic freedom

The second objection to trigger warnings focuses on the value of free speech and academic freedom, and suggests that these warnings undermine those values because they are tantamount to a form of censorship. In argumentative form:

- (4) Free speech and academic freedom are important values and are undermined by censorship.

- (5) Trigger warnings censor the expression of certain ideas.

- (6) Therefore, trigger warnings undermine free speech and academic freedom.

I don’t think this is a good objection to trigger warnings. I’d probably be willing to grant premise (4) for the sake of argument, although I should enter some reservations. I am not a free speech absolutist. It seems obvious to me that certain forms of speech are properly regulated and censored (e.g. fraud and coercive threats). The key question is whether there is an appropriate and trustworthy censor. Oftentimes there is not. Brian Leiter’s paper ‘The Case Against Free Speech’ outlines a position on this with which I am sympathetic. That said, I do think free speech and academic freedom are important values in universities.

The bigger issue is with premise (5). Trigger warnings do not seem to me to amount to censorship. Their original intention is not to prevent the expression of ideas. Their intention is to facilitate student engagement with ideas in the classroom: you give the trigger warning and then express the controversial idea or opinion. Furthermore, their relevance is to the classroom environment alone, where special obligations exist between the teacher and his/her students, which may justify some reduction in speech protection. Free speech and academic freedom relate more to the university and academic life as a whole, not to what happens in the classroom. To that extent, other manifestations of campus ‘coddling’ such as ‘no platforming’ or the desire to make the entire university a ‘safe space’ are greater threats to free speech and academic freedom. If trigger warnings are a slippery slope to those actions, then there may be reason to oppose them on these grounds. But if you can block the slippery slope, there is less reason to be concerned.

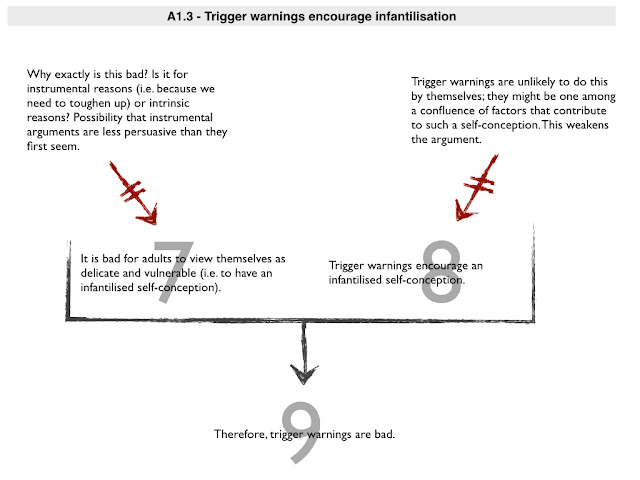

1.3 - Warnings encourage infantilisation

The third objection focuses on both the character-building function of education and the need to treat students as adults. The idea is that trigger warnings encourage students to see themselves as delicate, vulnerable, infantilised adults (always at risk of being traumatised by reality) and that this is a bad thing. In argumentative form:

- (7) It is bad for adults to view themselves as delicate and vulnerable (i.e. to have an infantilised self-conception).

- (8) Trigger warnings encourage an infantilised self-conception.

- (9) Therefore, trigger warnings are bad.

As someone who is undoubtedly an infantilised adult, I find it difficult to fully embrace premise (7). Being infant-like in certain respects seems like a good thing (e.g. maintaining a child-like sense of wonder or curiosity). Of course, this argument is specifically targeted at the vulnerable aspects of infant-likeness. I’m more sympathetic to the notion that this is bad. But I often wonder why it is bad. Is it bad for instrumental reasons? If you view yourself as a perpetual potential victim will you have a tougher time in life? The real world, we are often told, won’t be sympathetic to our vulnerabilities. We need to toughen up. I’m sceptical of this kind of instrumental argument. I’m not convinced that the ‘real world’ is necessarily unsympathetic to individual vulnerabilities. I think we are increasingly building a culture in which vulnerabilities are protected and insulated, well beyond the walls of the university (although it is, admittedly, difficult to see that at this moment in our political history). I think it makes far more sense to argue that viewing oneself as a perpetual potential victim is intrinsically bad (maybe because it denies or suppresses agency/autonomy).

Anyway, if we grant premise (7), we are still left with something of a hurdle to clear in relation to premise (8). The problem is that the causal connection between trigger warnings and an infantilised self-conception is indirect. There is no reason to think being exposed to a trigger warning necessarily causes this. It’s more plausible to suppose that trigger warnings, as part of a general confluence of factors favouring infantilisation, have this effect. But if the causal effect is more subtle and indirect, the specific argument against trigger warnings is weaker.

1.4 - The Impossibility of Anticipation

The fourth objection to trigger warnings is that it is impossible to anticipate someone’s triggers. There may be some relatively clear-cut cases. If I showed a video of an ISIS beheading it would probably be remiss of me not to issue some trigger warning. I can be pretty confident that this type of content would be traumatising. But beyond the paradigmatic cases, there is much more uncertainty. Sufferers of PTSD can be triggered by the strangest and least predictable things. You then end up with an odd result: everything is potentially triggering and so blanket warnings need to be issued. But blanket warnings are unlikely to be effective as they provide no specific guidance and are likely to be ignored or overlooked.

To put this more formally:

- (10) If it is impossible to anticipate every trigger, then blanket trigger warnings will need to be given for all course material, no matter how innocuous it may seem.

- (11) It is impossible to anticipate every trigger.

- (12) Therefore, blanket trigger warnings need to be given.

- (13) If blanket trigger warnings need to be given, they are unlikely to be effective.

- (14) Therefore, trigger warnings are unlikely to be effective.

This strikes me as being a better critique of trigger warnings. From what I have read, premise (11) does appear to be true. Victims of PTSD sometimes claim that their triggers are odd and unpredictable. And there is something of a reductio involved in giving blanket trigger warnings. If that’s what you are doing, it would seem that the warning is not so much about helping and respecting students with vulnerabilities but rather about social signalling.

That said, even though I think this is a better critique, I have some concerns about premises (10) and (13). I think someone might resist the slide from the impossibility of perfect anticipation to the need for blanket warnings by limiting themselves to the paradigm cases. I also think blanket warnings might be effective for some, even if they are useless for most.

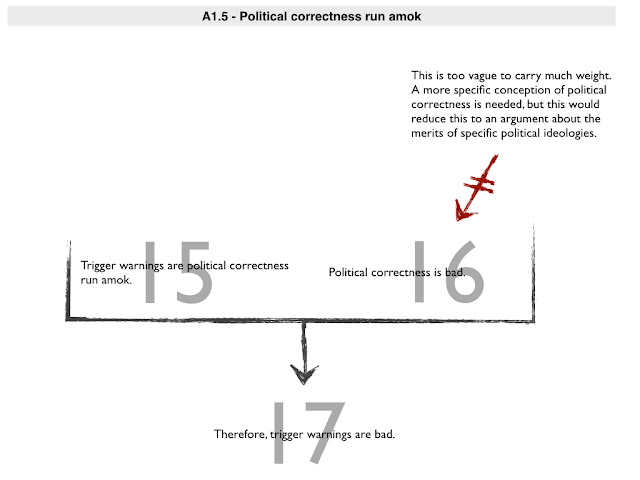

1.5 - Political Correctness Run Amok

A fifth objection to trigger warnings is that they are yet another symptom of ‘political correctness’ run amok. Wyatt cites several commentators making this claim, often supplemented by some critique of an associated ideological agenda (e.g. social justice, feminism). It’s difficult to know what to make of this objection because it is usually defended in enthymematic form, i.e. with its underlying normative principle implied rather than expressed. If we make that normative principle explicit, problems emerge.

The argument must be something like the following:

- (15) Trigger warnings are political correctness run amok.

- (16) Political correctness is bad.

- (17) Therefore, trigger warnings are bad.

Let’s grant (15) for the sake of argument. That leaves us with (16). My problem with this is simple. ‘Political correctness’ is just way too vague for me to know what to make of this argument. You would need to have a more specific conception of political correctness for the argument to have any weight. But if you make it more specific you probably reduce the debate to one about a specific political agenda (such as feminism and/or social justice - both of which are also vague). It is going to be very difficult to evaluate such an agenda in a comprehensive way and the argument is consequently going to remain highly contested.

For what it is worth, I think certain manifestations of political correctness are morally sensible and probably commendable (e.g. condemning the use of racial slurs), and others are more silly and counterproductive. I suspect the same is true for trigger warnings: some (such as the hypothetical warning one might give before displaying an ISIS beheading video) are morally sensible and others less so.

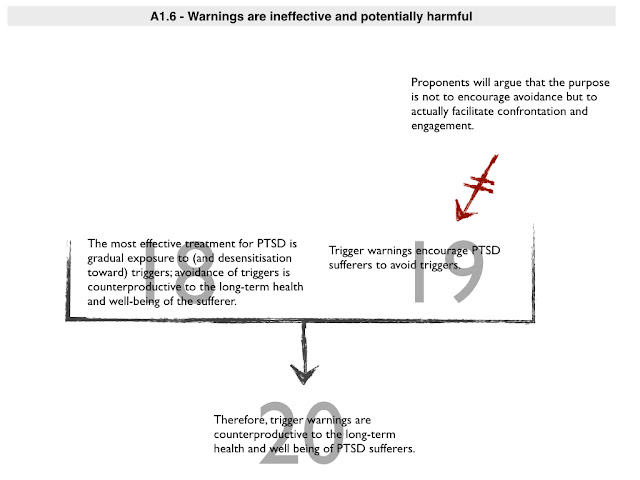

1.6 - Trigger Warnings are Ineffective and Possibly Harmful

A sixth objection focuses on the origins of trigger warnings in relation to PTSD. The originally conceived purpose for trigger warnings was to help prevent distress amongst those suffering from some sort of post-traumatic stress disorder. But opponents argue that trigger warnings encourage people to avoid triggers and this is contrary to the long-term health and well-being of PTSD sufferers. Gradual exposure to and desensitisation toward triggers is the more effective treatment.

I would state this argument like this:

- (18) The most effective treatment for PTSD is gradual exposure to (and desensitisation toward) triggers; avoidance of triggers is counterproductive to the long-term health and well-being of the sufferer.

- (19) Trigger warnings encourage PTSD sufferers to avoid triggers.

- (20) Therefore, trigger warnings are counterproductive to the long-term health and well-being of PTSD sufferers.

A few points about this argument. First, as noted above, most proponents of trigger warnings will resist premise (19). They will argue that the purpose is not to encourage avoidance but to enable participation. But there may well be a gap between intended purpose and effect: trigger warnings may not be intended to encourage avoidance but they end up working that way. Second, and more importantly, I think this argument does help make a significant point. There is a danger that professors and lecturers think they are helping their students by issuing trigger warnings, and that this is all they need to do to help sufferers of PTSD, but this may not be the case if they are simply fostering avoidance. There is, consequently, a danger that in embracing trigger warnings professors will wash their hands of other pastoral duties they may owe to their students.

Finally, I question the assumption underlying this argument, namely: that trigger warnings are about helping sufferers of PTSD. That might have been true originally but I suspect nowadays that trigger warnings have evolved into being about something else. In particular, I think they have probably evolved into a social signalling tool. They say to students ‘you are welcome here’ or ‘I share a certain set of values and assumptions with you’ and so on. Some might view those signals positively; some might view them negatively.

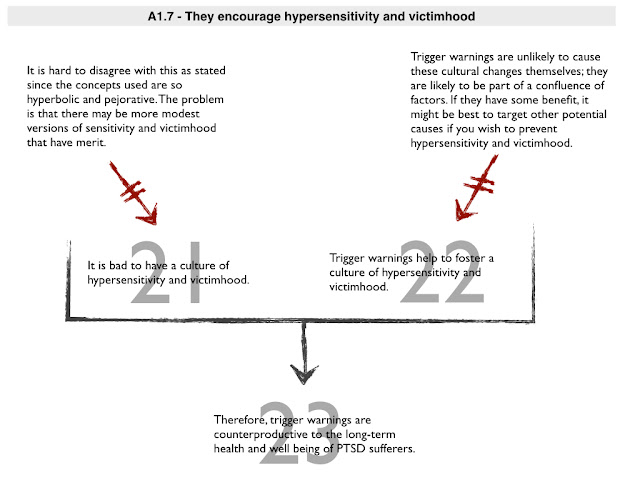

1.7 - Society at risk argument

The final objection is the most general. It claims that trigger warnings have negative consequences for society at large as they help to breed a culture of hypersensitivity and victimhood.

- (21) It is bad to have a culture of hypersensitivity and victimhood.

- (22) Trigger warnings help to foster a culture of hypersensitivity and victimhood.

- (23) Therefore, trigger warnings are damaging to society.

Premise (21) sounds plausible, but I suspect that is because the terms used within it are deliberately hyperbolic and pejorative. A culture of victimhood probably is a bad thing, but that doesn’t mean that there aren’t genuine victims who need our respect and assistance. Likewise, ‘hyper’-sensitivity is obviously excessive, but surely some degree of sensitivity is a good thing? Proponents of trigger warnings are likely to reframe the societal consequences as a positive. Where a critic sees hypersensitivity and victimhood, they will see tolerance, respect and care. It’s difficult to say who is right in the abstract. I’m certainly concerned about the slide toward hypersensitivity and victimhood, but I struggle sometimes to rationalise my concern.

Premise (22) also seems problematic for reasons stated previously. Trigger warnings are unlikely to cause these negative effects in and of themselves. Their effect, rather, will be part of a general confluence of factors. This makes it difficult to weigh this argument. If there are some benefits to trigger warnings, there may be reason to encourage them even if they contribute to a culture of hypersensitivity. It might be better to target the other factors that contribute to such a culture.

1.8 - Interim Summary

Before we proceed to address the positive arguments, let’s quickly get our bearings. My general sense from this analysis is that some objections to trigger warnings (e.g. the impossibility of anticipation objection) are better than others (e.g. the political correctness objection). I also think it’s clear that many of the objections to trigger warnings work on the assumption that they will not function as originally intended and will have dangerous spillover effects. If they functioned purely as intended — as a way to facilitate rather than discourage classroom participation — they would be relatively innocuous.

2. Four Arguments in Favour of Trigger Warnings

You might think it is unnecessary to go through the arguments in favour of trigger warnings at this stage. After all, some of them were implicit in the analysis of the arguments we just reviewed. Nevertheless, some engagement with the positive argument is worthwhile, if only because it will change one’s perspective on the debate. Fortunately, we can be briefer in this discussion since much of the relevant territory has already been covered.

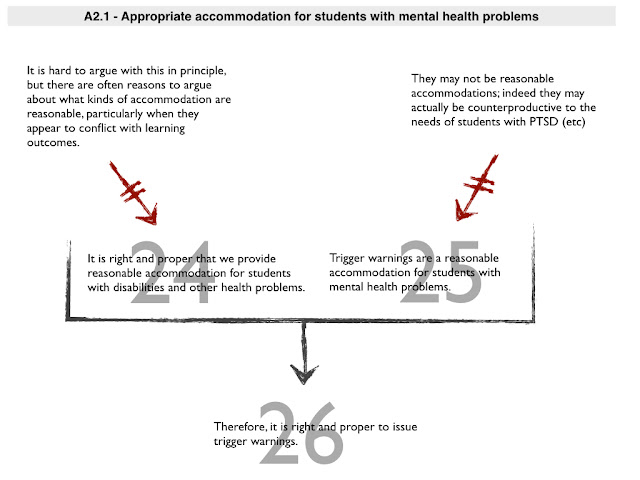

2.1 - Appropriate accommodation for students with mental illnesses

The first argument in favour of trigger warnings is that they are an appropriate way to accommodate students with mental health problems. This is part of the original rationale for trigger warnings and seems like the most sensible argument in their favour. After all, we make reasonable accommodations for students with health problems or disabilities all the time.

- (24) It is right and proper that we provide reasonable accommodation for students with disabilities and other health problems.

- (25) Trigger warnings are a reasonable accommodation for students with mental health problems.

- (26) Therefore, it is right and proper to issue trigger warnings.

Premise (24) is relatively unobjectionable. It is a widely accepted principle of equality law in many jurisdictions. The difficulty is in determining what is ‘reasonable’. Anyone who has dealt with a university disability service will have some sense of the difficulties that can arise. For example, I like to assess students’ public speaking in some of my classes but I have frequently been told that students with anxiety disorders cannot be compelled to undertake this form of assignment. I have issues with this since I think developing public speaking skills is important, but I often take the path of least resistance and don’t kick up a fuss (and, in any event, many students with anxiety disorders tell me they are happy to take the tests since they would like to develop these skills themselves).

Premise (25) is the more contentious one. Defenders of trigger warnings will claim that offering a trigger warning is like warning someone with epilepsy that strobe lights will be used in a performance. They are only for those with mental health problems and can be easily ignored by everyone else. But as we saw above, opponents will argue that things are not so simple. Trigger warnings, they say, may encourage all students to think they have mental health problems (that they are more vulnerable than they really are or should be). They may also argue that trigger warnings will not be very effective forms of reasonable accommodation. As per argument 1.6, they may argue that they will be counterproductive for true sufferers of PTSD.

2.2 - Trigger warnings as small acts of empathy that minimise harm

The second argument is somewhat similar to the first. Where the first argument focused specifically on obligations to those with disabilities and mental health problems, the second argument focuses on general principles of decency and humanity. The idea is that some people are genuinely vulnerable and we owe it to them to make the classroom as welcoming a place as possible. Trigger warnings allow us to do that.

- (27) It is right and proper to treat others (students in particular) with respect, decency and tolerance.

- (28) Trigger warnings allow us to treat students with respect, decency and tolerance.

- (29) Therefore, it is right and proper to issue trigger warnings.

The difficulties with this argument will be familiar by now. I don’t think anyone would deny that we ought to treat others with respect and decency. What they might deny is that this is a paramount or primary duty. Maybe, as educators, our primary duty is to the truth, not to tolerance and respect? They may also deny that trigger warnings are the best way to show respect and decency. Many will favour a ‘you have to be cruel to be kind’ mentality, which argues that being overly deferential to a student’s perceived vulnerabilities will be counterproductive to their long-term success and well-being.

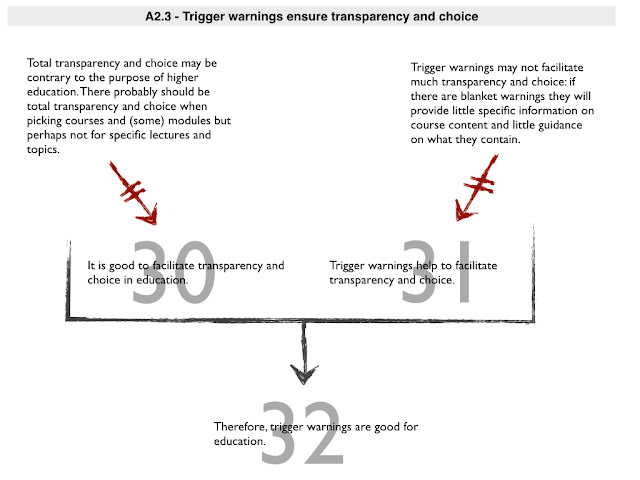

2.3 - Trigger warnings ensure transparency

The third argument in favour of trigger warnings is different from the preceding two. Where they focused on our duties to the more vulnerable students, this one focuses on making the classroom better for all, irrespective of their underlying psychological makeup. The idea is that giving trigger warnings facilitates transparency and choice: it allows students to know what they are going to face on a given course and allows them to choose when to face potentially disturbing or traumatic material.

- (30) It is good to facilitate transparency and choice in education.

- (31) Trigger warnings help to facilitate transparency and choice.

- (32) Therefore, trigger warnings are good for education.

Premise (30) is bound to raise the hackles of some academics. Transparency and choice may sound unobjectionable at first glance — there are many ways in which universities are keen to promote both — but I also know many professors and lecturers who think there are limits to this. They will argue that education is, ultimately, premised on an asymmetrical relationship: the teacher should know more (and better) than the students about some things, particularly about the content students ought to be taught. Students cannot determine everything about their education. There are legitimate compulsory college classes — subjects deemed important for all students taking a particular course — and there is a widespread belief that teachers should get to determine what is pedagogically appropriate material for their classes. So premise (30) is unlikely to be embraced in an unqualified form.

Fortunately, that may not matter. It may be possible for premise (31) to work with a more qualified form of premise (30). After all, it’s not as though trigger warnings need to facilitate complete transparency and choice. Indeed, if we are reduced to issuing blanket trigger warnings there may be very little transparency involved. And if trigger warnings are issued in course catalogues or module descriptions, and not for individual lectures or module topics, then choice could be facilitated at the point of entry into a class without compromising an individual lecturer's ability to determine course content.

On top of this, the kinds of concerns I list here assume that students are being enabled to avoid or drop out of classes or topics they find objectionable. If trigger warnings function as most of their proponents intend — as ways to facilitate rather than discourage participation — these concerns may not be well-founded.

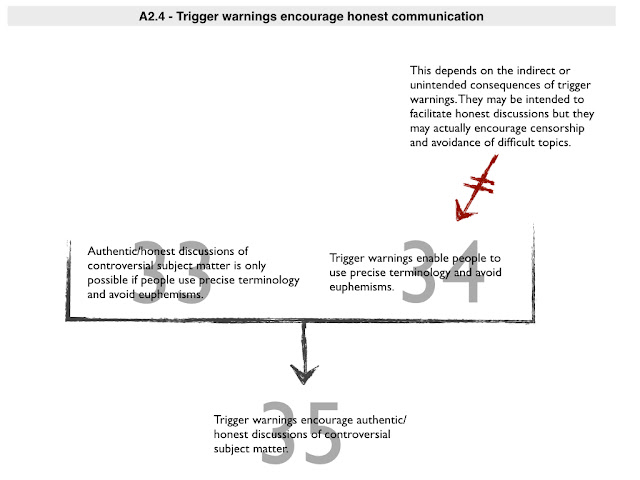

2.4 - Trigger warnings foster more authentic and honest discussion

The final argument in favour of trigger warnings turns the typical objection to them on its head. Many people associate trigger warnings with political correctness, censorship and the suppression of authentic and honest discussion. Professors are allegedly unable to confront important issues because they have to kowtow to the vulnerabilities of their students. But what if the opposite is true? What if trigger warnings actually enable a more honest confrontation with the truth?

The argument might work like this:

- (33) Authentic/honest discussion of controversial subject matter is only possible if people use precise terminology and avoid euphemisms.

- (34) Trigger warnings enable people to use precise terminology and avoid euphemisms.

- (35) Therefore, trigger warnings encourage authentic/honest discussions of controversial subject matter.

I think this is a pretty interesting argument. It again derives its force from the intended purpose of trigger warnings. If they don’t simply function as an excuse for people to avoid difficult subject matter and instead facilitate participation in classroom discussions, they may also help to foster a more honest dialogue. If you give the trigger warnings up front, you now have some justification for using exact terms in your discussions of violence, sexual abuse and racism, instead of hiding behind euphemisms or ignoring the topics entirely. People have been properly warned that they may encounter disturbing material so why not use this to actually discuss disturbing material?

Whether this argument works, of course, depends on the indirect and unintended consequences of trigger warnings. As noted several times already, they may not facilitate participation as intended; they may simply facilitate avoidance. And they may form part of a general confluence of factors that limits honest discussion on the campus.

3. Conclusion

That brings us to the end of this post. Hopefully this review of the arguments has been useful. I haven't come down decisively in favour of one point of view here. I'm somewhat conflicted myself. I'm probably constitutionally or dispositionally inclined toward the anti-view, but I think many of the arguments in favour of that view are less persuasive than they first appear. They do not focus on the primary intended effects of trigger warnings. Instead they worry about things that are far more difficult to assess, like the long-term or downstream consequences of a trigger warning culture. That said, the arguments in favour of trigger warnings have many weak spots too. They may have good intentions lying behind them but there isn't strong evidence to suggest that they work as intended (there are anecdotes of course) and they may encourage a degree of complacency that is counterproductive to their intended aims.

As I said at the outset, this review of arguments only covers the first half of Wyatt's article. Her main goal was not to evaluate each of these arguments but to defend her own take on the use of trigger warnings. I'll look at that in a future post.