(Part One)

This post is the second in a brief series looking at Nicholas Agar’s Searlian Wager argument. The argument is a response to Ray Kurzweil’s claim that we should upload our minds to some electronic medium in order to experience the full benefits of the law of accelerating returns. If that means nothing to you, read part one.

The crucial premise in the Searlian Wager argument concerns the costs and benefits of uploading your mind versus the costs and benefits of not uploading your mind. To be precise, the crucial premise says that the expected payoff of uploading your mind is less than the expected payoff of not uploading your mind. Thus, it would not be rational to upload your mind.

In this post I want to outline Agar’s defence of the crucial premise.

1. Agar’s Strategy

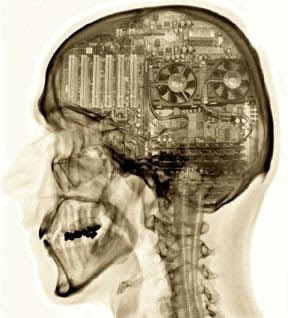

The following is the game tree representing Searle’s Wager. It depicts the four outcomes that arise from our choice of whether to upload or not under the two possible conditions (Strong AI or Weak AI).

The initial force of the Searlian Wager derives from recognising the possibility that Weak AI is true. For if Weak AI is true, the act of uploading would effectively amount to an act of self-destruction. But recognising the possibility that Weak AI is true is not enough to support the argument. Expected utility calculations can often have strange and counterintuitive results. To know what we should really do, we have to know whether the following inequality really holds (numbering follows part one):

- (6) Eu(~U) > Eu(U)
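Written out, the two sides of (6) would look something like the following. This is only a sketch under stated assumptions: p is taken to be the probability that Strong AI is true, and a, b, c and d are taken to be the payoffs of the four outcomes in the game tree, with a and b arising under Strong AI and c and d under Weak AI. That labelling is an assumption made for illustration.

$$
\begin{aligned}
Eu(U) &= p\,a + (1 - p)\,c \\
Eu(\sim U) &= p\,b + (1 - p)\,d
\end{aligned}
$$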

But there’s a problem: we have no figures to plug into the relevant equations, and even if we did come up with figures, people would probably dispute them (“You’re underestimating the benefits of uploading”, “You’re underestimating the costs of uploading” etc. etc.). So what can we do? Agar employs an interesting strategy. He reckons that if he can show that the following two propositions hold true, he can defend (6).

- (8) Death (outcome c) is much worse for those considering uploading than living (outcome b or d).

- (9) Uploading and surviving (a) is not much better, and possibly worse, than not uploading and living (b or d).

As I say, this strategy is interesting. While I know that it is effective for a certain range of values (I checked), it is beyond my own mathematical competence to prove that it is generally effective (i.e. true for all values of a, b, c, and d, and all probabilities p and 1-p, that satisfy the conditions set down in 8 and 9). If anyone is comfortable trying to prove this kind of thing, I’d be interested in hearing what they have to say.
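For what it is worth, the check can be sketched in a few lines of Python. The sketch assumes that p is the probability that Strong AI is true, that a and b are the payoffs under Strong AI, and that c and d are the payoffs under Weak AI; the function names and the sample numbers are hypothetical and do not come from Agar. On that reading, (6) holds exactly when (1 - p)(d - c) > p(a - b), so if uploading offers any gain at all (a > b) there is a break-even probability above which (6) fails, however bad death is.

```python
def expected_utilities(a, b, c, d, p):
    """Return (Eu(U), Eu(~U)).

    Assumed labelling: p is the probability that Strong AI is true;
    a = upload and survive, b = don't upload (Strong AI),
    c = upload and die, d = don't upload (Weak AI).
    """
    eu_upload = p * a + (1 - p) * c
    eu_stay = p * b + (1 - p) * d
    return eu_upload, eu_stay


def premise_6_holds(a, b, c, d, p):
    """True when Eu(~U) > Eu(U) for the given payoffs and probability."""
    eu_upload, eu_stay = expected_utilities(a, b, c, d, p)
    return eu_stay > eu_upload


def break_even_probability(a, b, c, d):
    """Probability of Strong AI at which the two options are tied.

    Below this value (6) holds; above it uploading has the higher
    expected payoff. Returns None when uploading offers no gain over
    staying biological (a <= b), in which case it never comes out ahead.
    Assumes death is worse than living (d > c), as premise (8) says.
    """
    gain = a - b  # extra payoff from uploading if Strong AI is true
    loss = d - c  # payoff lost by uploading if Weak AI is true
    if gain <= 0:
        return None
    return loss / (gain + loss)


# Hypothetical payoffs: living = 100, death = 0, successful upload = 110.
payoffs = dict(a=110, b=100, c=0, d=100)
for p in (0.5, 0.9, 0.95):
    print(p, premise_6_holds(p=p, **payoffs))
print("break-even p:", break_even_probability(**payoffs))
```

With these made-up numbers, (8) and (9) are both satisfied, yet (6) only holds while the probability of Strong AI stays below roughly 0.91. So on this reading the strategy works across a wide range of values, but not for every probability unless uploading is no better than biological life.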

In the meantime, I’ll continue to spell out how Agar defends (8) and (9).

2. A Fate Worse than Death?

On the face of it, (8) seems to be obviously false. There would appear to be contexts in which the risk of self-destruction does not outweigh the potential benefit (however improbable) of continued existence. Such a context is often exploited by the purveyors of cryonics. It looks something like this:

You have recently been diagnosed with a terminal illness. The doctors say you’ve got six months to live, tops. They tell you to go home, get your house in order, and prepare to die. But you’re having none of it. You recently read some adverts for a cryonics company in California. For a fee, they will freeze your disease-ridden body (or just the brain!) to a cool −196 °C and keep it in storage with instructions that it only be thawed out at such a time when a cure for your illness has been found. What a great idea, you think to yourself. Since you’re going to die anyway, why not take the chance (make the bet) that they’ll be able to resuscitate and cure you in the future? After all, you’ve got nothing to lose.

This is a persuasive argument. Agar concedes as much. But he thinks the wager facing our potential uploader is going to be crucially different from that facing the cryonics patient. The uploader will not face the choice between certain death, on the one hand, and possible death/possible survival, on the other. No; the uploader will face the choice between continued biological existence with biological enhancements, on the one hand, and possible death/possible survival (with electronic enhancements), on the other.

The reason has to do with the kinds of technological wonders we can expect to have developed by the time we figure out how to upload our minds. Agar reckons we can expect such wonders to allow for the indefinite continuance of biological existence. To support his point, he appeals to the ideas of Aubrey de Grey. De Grey thinks that, given appropriate funding, medical technologies could soon help us to achieve longevity escape velocity (LEV). LEV is the point at which new anti-aging therapies consistently add years to our life expectancies faster than age consumes them.

If we do achieve LEV, and we do so before we achieve uploadability, then premise (8) would seem defensible. Note that this argument does not actually require LEV to be highly probable. It only requires it to be relatively more probable than the combination of uploadability and Strong AI.

3. Don’t you want Wikipedia on the Brain?

Premise (9) is a little trickier. It proposes that the benefits of continued biological existence are not much worse (and possibly better) than the benefits of Kurzweilian uploading. How can this be defended? Agar provides us with two reasons.

The first relates to the disconnect between our subjective perception of value and the objective reality. Agar points to findings in experimental economics that suggest we have a non-linear appreciation of value. I’ll just quote him directly since he explains the point pretty well:

For most of us, a prize of $100,000,000 is not 100 times better than one of $1,000,000. We would not trade a ticket in a lottery offering a one-in-ten chance of winning $1,000,000 for one that offers a one-in-a-thousand chance of winning $100,000,000, even when informed that both tickets yield an expected return of $100,000....We have no difficulty in recognizing the bigger prize as better than the smaller one. But we don’t prefer it to the extent that it’s objectively...The conversion of objective monetary values into subjective benefits reveals the one-in-ten chance at $1,000,000 to be significantly better than the one-in-a-thousand chance at $100,000,000 (pp. 68-69).
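The arithmetic behind the quoted lottery example can be illustrated with a short Python sketch. The square-root utility function below is purely an assumption, chosen as one simple concave curve standing in for "non-linear appreciation of value"; Agar does not specify a particular function, and the helper names are hypothetical.

```python
import math


def expected_value(prob, prize):
    """Expected monetary return of a ticket that pays `prize` with probability `prob`."""
    return prob * prize


def expected_utility(prob, prize, utility=math.sqrt):
    """Expected subjective benefit of the same ticket.

    `utility` converts money into subjective benefit. The default
    (square root) is an illustrative assumption, not Agar's model.
    Losing is treated as winning 0.
    """
    return prob * utility(prize) + (1 - prob) * utility(0)


small_prize_ticket = (0.1, 1_000_000)      # one-in-ten chance of $1,000,000
large_prize_ticket = (0.001, 100_000_000)  # one-in-a-thousand chance of $100,000,000

for name, ticket in [("small", small_prize_ticket), ("large", large_prize_ticket)]:
    print(name, expected_value(*ticket), expected_utility(*ticket))
```

Both tickets have the same expected monetary return, but under the assumed concave utility the one-in-ten ticket comes out well ahead, which is the pattern the quotation describes. A different concave function would change the size of the gap, not its direction.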

How do these quirks of subjective value affect the wager argument? Well, the idea is that continued biological existence with LEV is akin to the one-in-ten chance of $1,000,000, while uploading is akin to the one-in-a-thousand chance of $100,000,000: people are going to prefer the former to the latter, even if the latter might yield the same (or even a higher) payoff.

I have two concerns about this. First, my original formulation of the wager argument relied on the straightforward expected-utility-maximisation principle of rational choice. But by appealing to the risks associated with the respective wagers, Agar would seem to be incorporating some element of risk aversion into his preferred rationality principle. This would force a revision of the original argument (premise 5 in particular), albeit one that works in Agar’s favour. Second, the use of subjective valuations might affect our interpretation of the argument. In particular, it raises the question: is Agar saying that this is how people will in fact react to the uploading decision, or is he saying that this is how they should react to the decision?

Agar’s second line of defence for premise (9) concerns species-relative values and claims that converting ourselves into electronic beings will result in the loss of experiences and motivations that are highly valuable. Here, at last, we get a whisper of Agar’s main argument, but alas it remains a whisper. He promises to elaborate further in chapter nine.

4. Conclusion

This concludes Agar’s main defence of the Searlian Wager argument. The implication of the argument is simple: the greater certainty attached to continued biological existence will make it the more attractive option. As a result, it will never be rational to upload our minds.

Following on from his main defence, Agar looks at the possibility of testing to see whether uploading preserves conscious experience before deciding to fully upload ourselves. This could reduce the uncertainty associated with the wager and thus make uploading the rational choice. Agar thinks any proposed experiments are unlikely to prove what we would like them to prove. The uncertainty would seem to be at the heart of the hard problem of consciousness.

Finally, Agar also discusses, at the end of chapter four, the problem of unfriendly AI and the dangers associated with creating electronic copies of yourself. I won’t discuss these issues here. Enough food for thought should have been provided by the wager argument itself.
